Software engineer focused on delivering real value. I transform critical processes and bottlenecks into stable, scalable, and autonomous systems using Python, Docker, Cloud Computing (AWS/Azure), and AI pipelines. I write code designed to survive in production.

A hybrid technical profile (Backend, Cloud, DevOps) forged by resolving critical operational problems.

I am Javier Mosquera, a Software Engineer who puts stability, optimisation, and real business impact ahead of over-engineering. My core expertise is backend development and integrating AI into solid cloud infrastructure.

My background managing critical, high-pressure logistics operations gave me something unusual in technical profiles: a sense of urgency, the habit of anticipating risk, and a high tolerance for pressure. When I build a system, I take full responsibility for how it runs in production.

I currently design, deploy, and maintain autonomous bots, REST APIs, and data pipelines using Python and the Docker ecosystem. I deploy infrastructure on Azure and AWS, aiming for high availability and low maintenance.

I operate with full autonomy in 100% remote and asynchronous environments, delivering thorough technical documentation, strict code architecture, and consistently functional iterative deliveries.

I build resilient systems that run 24/7 and recover from failures autonomously.

I reduce human error by transforming slow manual flows into automated pipelines.

I work fluently with AWS, Azure, and Docker so that the code runs on scalable infrastructure.

Expert in async communication, technical documentation, and frictionless distributed work.

A unique combination of advanced backend development, cloud systems architecture, and a pragmatic mindset to solve problems without adding technical debt.

Development of robust, decoupled services using Python, FastAPI, and Node.js. Modular code, static typing, and well-documented APIs ready to be consumed at scale.

Transforming critical processes into orchestrated, measurable systems. I use autonomous Python bots, cron jobs, webhooks, and deep API integrations.

I do not just write code; I ensure its delivery. Experience in containerisation (Docker), deployment orchestration on Azure/AWS, Nginx/Cloudflare management, and continuous monitoring.

Practical use of AI to generate tangible value. Advanced consumption of the OpenAI API, programmatic prompt engineering, and automated NLP in data pipelines.

Applying SOLID principles, clean code, and testing to prevent technical debt from blocking future growth. When building a database, I design it to scale without early restructuring.

Zero friction in distributed environments. I excel through async proactivity, detailed updates to Kanban boards (Jira/Trello), and extensive documentation of my developments.

Applied engineering projects, integrating backend, cloud infrastructure, and automation in real scenarios.

End-to-end design and deployment of web solutions, AI tools, and complex automated systems. Full responsibility from PostgreSQL database architecture to secure deployment using Docker, Nginx Proxy Manager, and Cloudflare.

Backend development and data orchestration to scale internal operational efficiency. Implementation of CI/CD pipelines to automate and secure production deployments on AWS and Azure environments.

Comprehensive management of real-time logistics operations under strict performance KPIs and downtime constraints. This experience forged my pragmatic engineering mindset: focusing on preventing cascading incidents and ensuring operational continuity (SRE mindset).

Not proofs of concept. Real solutions processing data, automating operations, and supporting traffic.

Autonomous Python engine that monitors job portals, applies semantic filtering, and prevents duplicate entries. Built on a SQLite database, cron-style job scheduling (APScheduler), and integrated Telegram channel broadcasting.
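
To illustrate the duplicate-prevention idea, here is a minimal sketch using only the Python standard library. It is not the production code: the table name `seen_jobs`, the `fingerprint` helper, and the choice of hashing the normalised title and URL are assumptions made for this example.

```python
import hashlib
import sqlite3


def init_db(conn: sqlite3.Connection) -> None:
    # Hypothetical schema: the primary key makes SQLite reject repeated postings.
    conn.execute(
        "CREATE TABLE IF NOT EXISTS seen_jobs ("
        "fingerprint TEXT PRIMARY KEY, title TEXT, url TEXT)"
    )


def fingerprint(title: str, url: str) -> str:
    # Normalise case and surrounding whitespace so trivial variations collide.
    normalised = f"{title.strip().lower()}|{url.strip().lower()}"
    return hashlib.sha256(normalised.encode("utf-8")).hexdigest()


def store_if_new(conn: sqlite3.Connection, title: str, url: str) -> bool:
    """Insert the posting and return True only if it was not seen before."""
    cur = conn.execute(
        "INSERT OR IGNORE INTO seen_jobs (fingerprint, title, url) "
        "VALUES (?, ?, ?)",
        (fingerprint(title, url), title, url),
    )
    conn.commit()
    # rowcount is 1 when the row was inserted, 0 when it was ignored.
    return cur.rowcount == 1
```

A scheduled job could call `store_if_new` for each scraped posting and broadcast only those for which it returns `True`.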

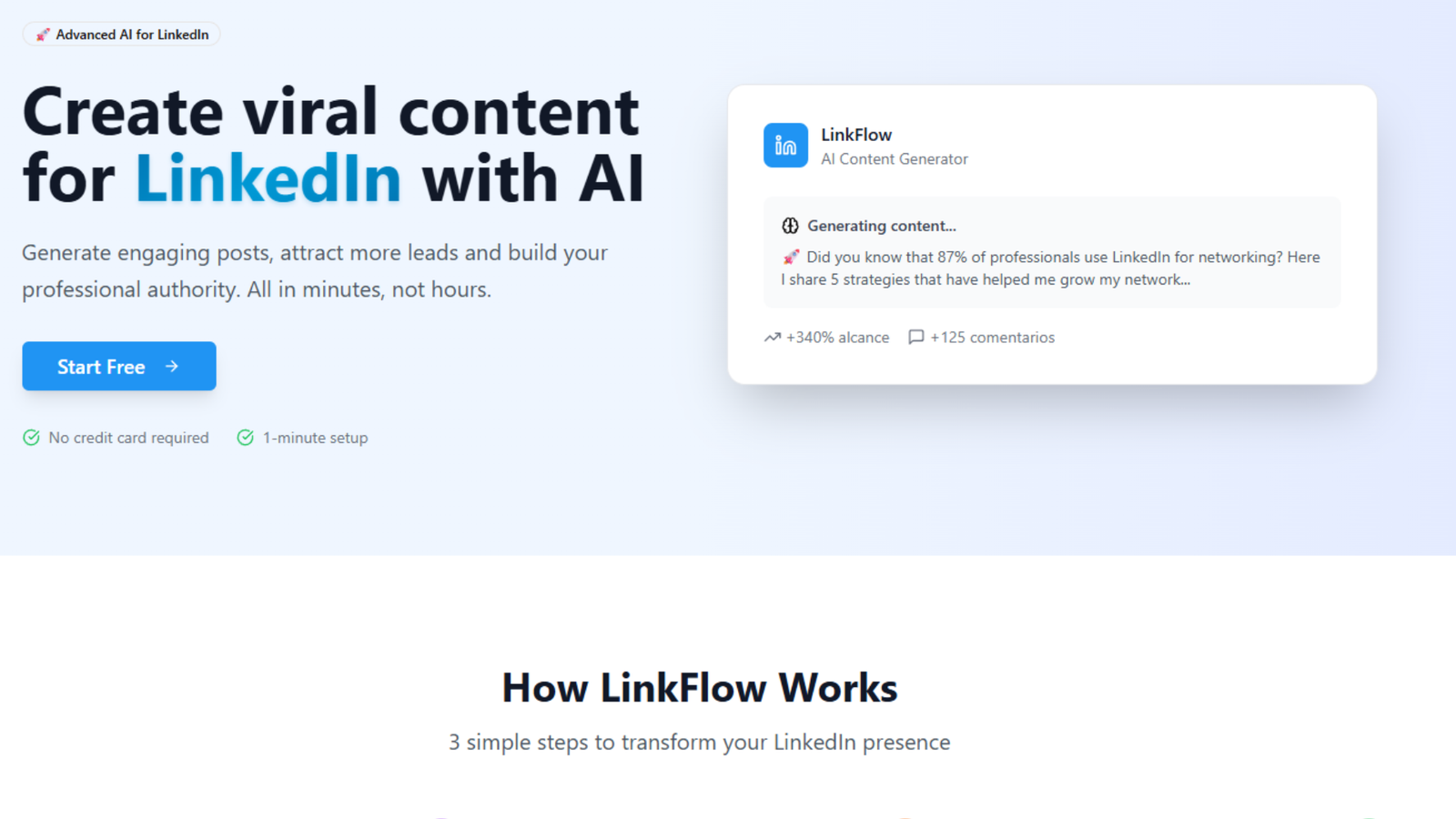

Platform integrating OpenAI models with a robust Node.js and PostgreSQL backend to optimise B2B content. Containerised infrastructure via Docker for simplified horizontal scaling.

Web ecosystem focused on B2B lead capture and retention. Connects frontend to CRMs via webhooks, supported by secure relational databases. Security managed via Nginx Proxy and Cloudflare.

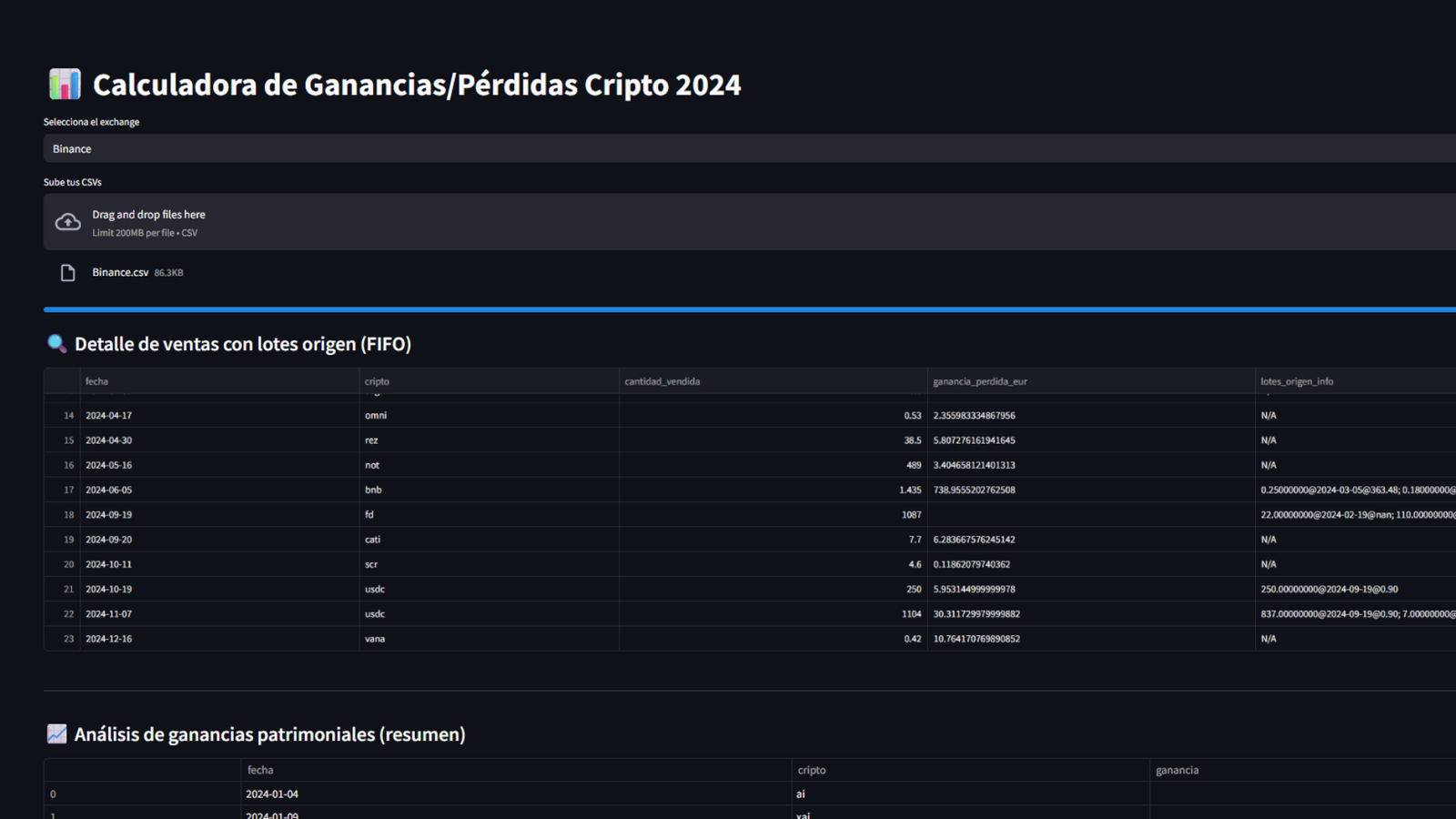

Highly specialised backend tool (Python + Pandas) that audits and normalises thousands of exchange and DeFi transactions, calculating FIFO cost basis, yields, and airdrops for strict regulatory tax reporting.
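
As a rough illustration of FIFO matching, the sketch below computes realised gains for a single asset. It is a simplified standard-library example rather than the tool's Pandas implementation: the function name `fifo_realised_gain` and the `(side, quantity, unit_price)` trade format are assumptions, and fees, yields, and airdrops are left out.

```python
from collections import deque
from decimal import Decimal


def fifo_realised_gain(trades):
    """Return the realised gain for one asset using FIFO lot matching.

    `trades` is an iterable of (side, quantity, unit_price) tuples in
    chronological order, where side is "buy" or "sell" (hypothetical format).
    """
    lots = deque()  # each lot is [remaining_quantity, unit_cost]
    gain = Decimal("0")
    for side, qty, price in trades:
        qty, price = Decimal(str(qty)), Decimal(str(price))
        if side == "buy":
            lots.append([qty, price])
        elif side == "sell":
            while qty > 0:
                if not lots:
                    raise ValueError("sell quantity exceeds holdings")
                lot = lots[0]  # the oldest lot is consumed first
                used = min(qty, lot[0])
                gain += used * (price - lot[1])
                lot[0] -= used
                qty -= used
                if lot[0] == 0:
                    lots.popleft()
        else:
            raise ValueError(f"unknown side: {side!r}")
    return gain
```

For example, buying 1 unit at 100, buying 1 unit at 200, and then selling 1.5 units at 300 consumes the first lot entirely and half of the second, for a realised gain of 250.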

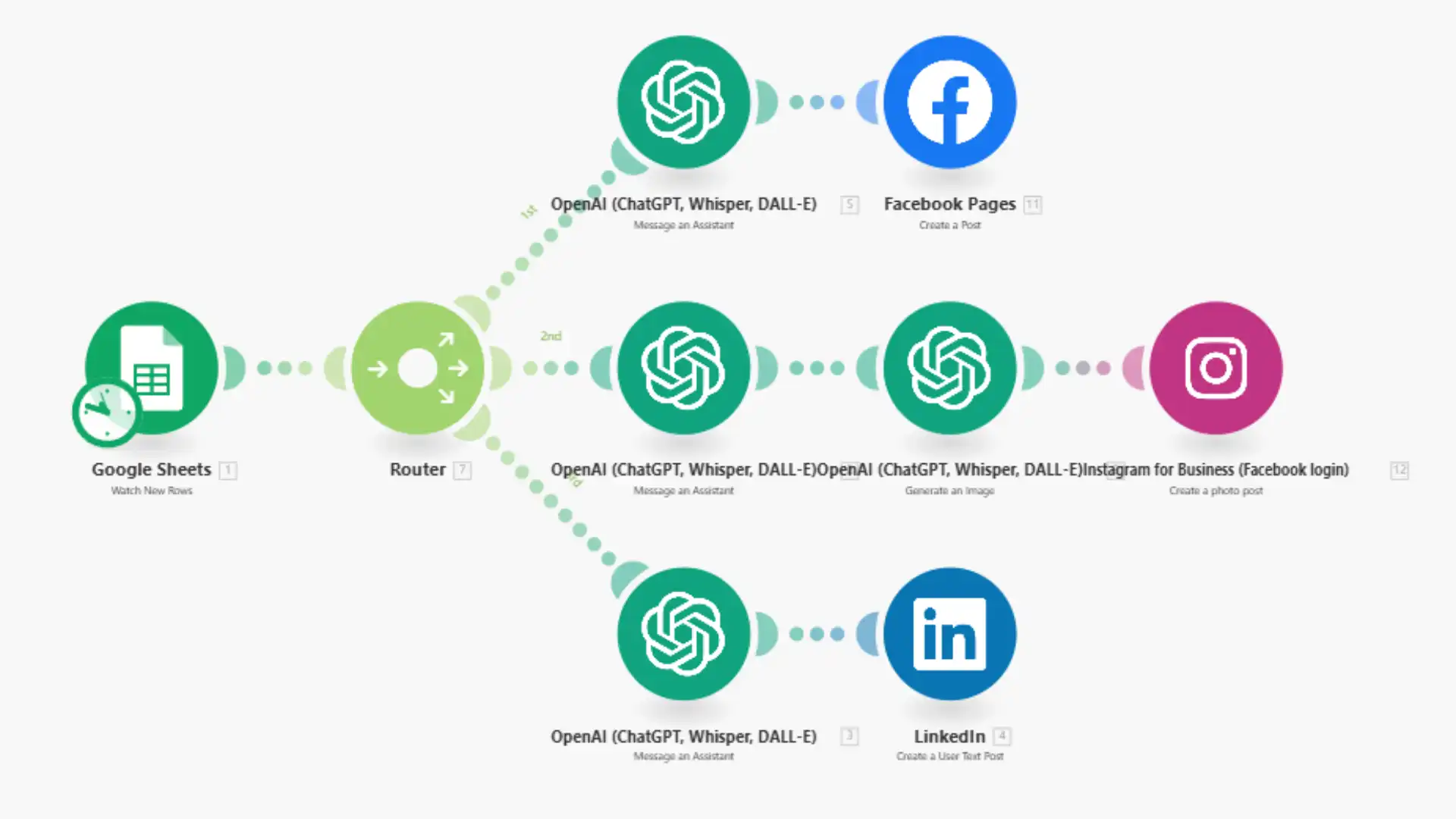

Heavy automation for content departments. Using Make.com and custom Python scripts, it orchestrates the OpenAI API, DALL-E, and Google Sheets API to mass-publish without human intervention.

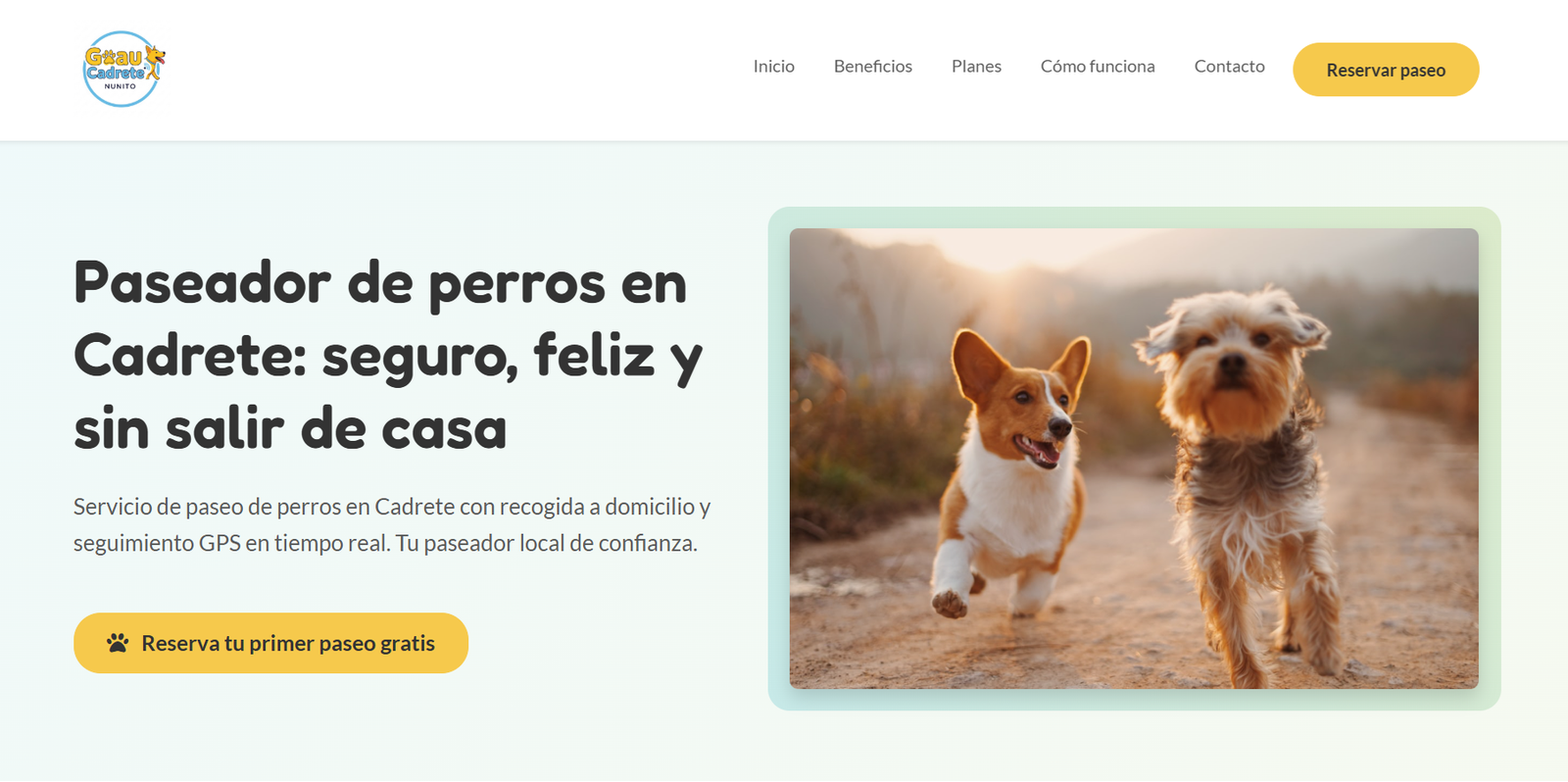

Comprehensive Flask backend application for digitising B2C bookings. Deployed on ARM architecture (Raspberry Pi) with Docker Compose orchestration and automated SSL certificate management with Certbot.

Tools mastered in real production scenarios, not just in local environments.

Python (Expert)

JavaScript

TypeScript

Java

FastAPI

Flask

Node.js

RESTful Architecture

Azure (Certified)

AWS

Docker & Containers

GitHub Actions / CI/CD

Nginx / Reverse Proxies

Cloudflare Edge

Linux Admin

PostgreSQL

MySQL

SQLite

Relational Data Modelling

Guarantee of technical knowledge aligned with enterprise standards.

In modern engineering teams, writing good code is not enough. I stand out for my ability to work in total alignment with remote agile methodologies, providing stakeholders with continuous updates (clean commits, Jira/Trello reports, detailed pull requests), minimising the need for micro-management or unnecessary synchronous meetings.

I am currently available to join a new engineering team. If you are looking for a pragmatic technical profile focused on Backend, DevOps, or Cloud architecture for a 100% remote role, I'd love to explore whether there is a technical fit.